Need help in eliminating ghost corridors


Hello, I am using RTAB-Map for mapping and localization on my robot. For context, I am using a ZED Mini camera and a Unitree L2 lidar. I use the robot_localization package to fuse wheel odometry and ZED odometry into a fused odometry estimate for the robot, which I feed to RTAB-Map. The front ZED camera is used to extract visual features, and the lidar is used to build the 2D occupancy map.
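
For reference, robot_localization chooses which fields of each odometry input it fuses through a 15-element boolean `odomN_config` vector (x, y, z, roll, pitch, yaw, their six velocities, then three linear accelerations). The sketch below builds a velocity-only mask for both inputs. It assumes a planar robot (`two_d_mode`), and the topic names are hypothetical placeholders. Whether to include `vy` for a base that cannot move sideways is a judgment call.

```python
# Order of the 15 state fields in a robot_localization odomN_config vector.
STATE_FIELDS = (
    "x", "y", "z",
    "roll", "pitch", "yaw",
    "vx", "vy", "vz",
    "vroll", "vpitch", "vyaw",
    "ax", "ay", "az",
)


def config_mask(*fields):
    """Return the 15-element boolean list that enables only the named fields."""
    unknown = set(fields) - set(STATE_FIELDS)
    if unknown:
        raise ValueError(f"unknown state fields: {sorted(unknown)}")
    return [name in fields for name in STATE_FIELDS]


# Velocity-only fusion for a planar base: forward speed, lateral speed, yaw rate.
VELOCITY_ONLY = config_mask("vx", "vy", "vyaw")

ekf_params = {
    "two_d_mode": True,
    "odom0": "/wheel/odom",        # hypothetical wheel odometry topic
    "odom0_config": VELOCITY_ONLY,
    "odom1": "/zed/odom",          # hypothetical ZED odometry topic
    "odom1_config": VELOCITY_ONLY,
}

if __name__ == "__main__":
    for key, value in ekf_params.items():
        print(f"{key}: {value}")
```

The covariances reported on these input messages feed into the covariance of the fused output that RTAB-Map receives, so underestimated input covariances can make the fused odometry look more certain than it really is.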

I am only fusing the wheel velocities with the ZED odometry velocities, because the wheel odometry pose values weren't usable.

The issue is this: during the mapping phase, I drive the robot into a long corridor while the 2D occupancy grid is being built. At the end of the corridor, I turn the robot 180 degrees so that it faces back down the corridor. Sometimes a 'new' corridor then appears on the map at a small angular offset from the original one. I keep driving forward after the turn, assuming that when I turn the robot around again later, RTAB-Map will correct this ghost corridor. The good news is that I often see it attempt this correction.

My hypothesis about the root cause is that RTAB-Map trusts the odometry a LOT. As a result, loop closures that may be detected while driving back are not accepted, perhaps because the RGBD/OptimizeMaxError limit is exceeded or something similar, so RTAB-Map keeps mapping the corridor at that angular offset from the original.

To get a good map (with minimal streaks outside the corridor and such), this is my current procedure when driving to the end of the corridor and back:

1. Drive from one end of the corridor to the other, then turn 180 degrees.
2. Drive forward a short distance, then turn 180 degrees again to look back at how I approached the end of the corridor. At this moment I usually see a loop closure attempt, and most of the time it corrects the angular deviation.
3. Once I visually confirm that the corridor looks fine on the map, turn another 180 degrees and continue driving toward the start of the corridor.
4. Along the way, stop, turn 180 degrees to look back, and hope that a loop closure, if detected, corrects the corridor map.

Is there a better approach for mapping long, homogeneous corridors?
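
Why does a small angular deviation after the 180-degree turn produce a visibly separate corridor? As a rough back-of-the-envelope model, assuming the heading error stays constant after the turn and the robot then drives straight, the sideways offset grows linearly with the distance driven: offset = d · sin(θ). The distances and angles below are illustrative, not measurements from this robot.

```python
import math


def lateral_offset(distance_m, heading_error_deg):
    """Sideways displacement after driving straight for distance_m with a constant heading error."""
    return distance_m * math.sin(math.radians(heading_error_deg))


if __name__ == "__main__":
    for distance in (10.0, 30.0, 50.0):
        for error in (0.5, 1.0, 2.0):
            offset = lateral_offset(distance, error)
            print(f"{distance:4.0f} m at {error:.1f} deg -> {offset:.2f} m sideways")
```

For example, a 2-degree heading error over a 30 m return pass puts the far end about 1.05 m to the side, which is enough for the return pass to trace a second, slightly rotated corridor in the occupancy grid unless a loop closure pulls it back.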
I have tried Reg/Strategy 0, 1, and 2. What worked best for me was Reg/Strategy 1 or 2 with RGBD/OptimizeMaxError set to 5 (up from the default of 3). However, my concern is: is relaxing RGBD/OptimizeMaxError a band-aid for my problem? Is there a better way to map a corridor so that I get a good map and can also register the corridor's features in both directions?

A few things I can think of: adding a back camera and using its stream together with the front camera for loop closure, while the lidar builds the occupancy map. To be fair, I only rotate at the end of the corridor to capture visual features facing both ways, because I want to be able to localize once the map is created. Otherwise, my navigation stack freaks out if the robot is not oriented so that it knows for certain where it is on the map. If I could use both front and back cameras to capture visual features, I don't think localization would be a problem. However, I am not sure exactly how to set that up (assuming it is possible, and that I could then avoid rotating at the end of the corridor).

Any help will be greatly appreciated. These screenshots should help explain the problem visually:

[Screenshot: Reg/Strategy 0; RGBD/OptimizeMaxError 5]
[Screenshot: Reg/Strategy 1; RGBD/OptimizeMaxError 5]
[Screenshot: Reg/Strategy 2; RGBD/OptimizeMaxError 5]

Thank you.
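
To see why raising RGBD/OptimizeMaxError from 3 to 5 lets more loop closures through, recall the parameter's description: loop closures are rejected when the optimization error ratio, i.e. a link's absolute error divided by the square root of its variance, is greater than this value (0 disables the check). The sketch below is a simplified model of that rule under stated assumptions (translation only, one variance per link), not RTAB-Map's actual code. The `Link` class and the numbers are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Link:
    """One graph edge after optimization (simplified: translation only)."""
    name: str
    abs_error_m: float      # residual between measured and optimized relative translation
    linear_variance: float  # variance (m^2) assigned to the link, e.g. from odometry covariance

    @property
    def error_ratio(self) -> float:
        # Absolute error divided by the link's standard deviation.
        return self.abs_error_m / math.sqrt(self.linear_variance)


def accept_loop_closures(links, optimize_max_error=3.0):
    """Return True if new loop closures would be kept under this simplified check."""
    if optimize_max_error <= 0:  # 0 disables the check
        return True
    worst = max(link.error_ratio for link in links)
    return worst <= optimize_max_error


if __name__ == "__main__":
    # Same 0.10 m residual on an odometry link, with two different variances.
    tight = [Link("odom", 0.10, 0.001)]   # std ~0.032 m -> ratio ~3.16
    honest = [Link("odom", 0.10, 0.004)]  # std ~0.063 m -> ratio ~1.58
    for threshold in (3.0, 5.0):
        print(threshold,
              accept_loop_closures(tight, threshold),
              accept_loop_closures(honest, threshold))
```

In this toy case, the overconfident link is rejected at 3 and accepted at 5, while the link with a more realistic variance is accepted at the default of 3. Both changes accept the loop closure, but fixing the variance keeps the check able to catch genuinely wrong loop closures.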


Administrator


Is your lidar that 4D L2 Lidar? https://www.unitree.com/L2

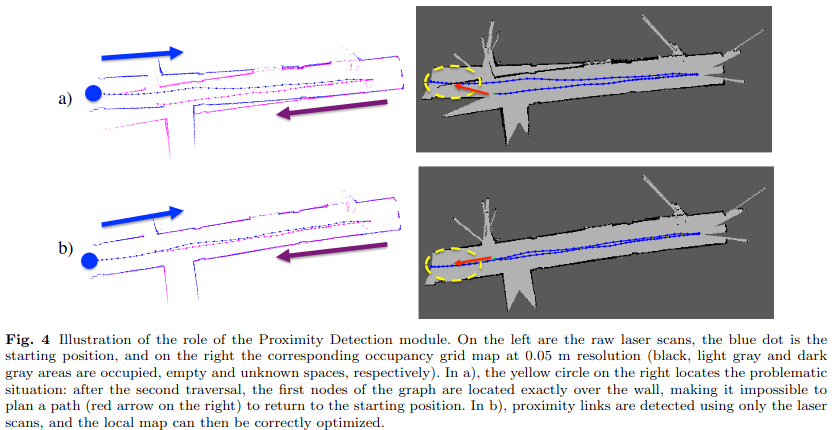

Are you using a 2D laser scan from your lidar or a 3D point cloud?

The problem you have is described in that paper (section 3.3). Because you have a lidar, you could enable RGBD/NeighborLinkRefining to correct your odometry (as in a setup like this one). With Reg/Strategy=1 and proximity detection enabled (the default), the robot should already be able to detect proximity loop closures with the lidar and correct the map, without having to rotate the camera back to find a visual loop closure. If you are using the 4D L2 lidar, you may also consider doing lidar odometry to improve your odometry.

As for whether increasing RGBD/OptimizeMaxError is a band-aid: yes and no. If the odometry covariance is very small, yes. In your case, the covariance may be underestimated (smaller than it should be): the robot seems to drift more in reality than the error set in the covariance. Ideally, we should try to keep RGBD/OptimizeMaxError at its default, but in cases where we don't have control over how the odometry covariance is computed, we can increase that parameter to accept more loop closures.
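
The suggestions above can be collected into a small RTAB-Map parameter set. This is a hedged sketch: the values are strings because rtabmap_ros parameters are commonly passed that way, the "defaults" dictionary reflects what I assume recent RTAB-Map versions use (check them against your installed version), and the helper `changed_from_defaults` is hypothetical, only there to show which settings actually differ from stock.

```python
# Assumed stock values in recent RTAB-Map versions (verify for your version).
RTABMAP_DEFAULTS = {
    "Reg/Strategy": "0",                   # 0=Visual, 1=ICP, 2=Visual+ICP
    "RGBD/NeighborLinkRefining": "false",
    "RGBD/ProximityBySpace": "true",
    "RGBD/OptimizeMaxError": "3.0",
}

# Corridor-oriented settings following the suggestions above.
corridor_params = {
    "Reg/Strategy": "1",                   # ICP registration using the lidar
    "RGBD/NeighborLinkRefining": "true",   # refine odometry links with the lidar
    "RGBD/ProximityBySpace": "true",       # proximity detection (already the default)
    "RGBD/OptimizeMaxError": "3.0",        # kept at default; fix the odometry covariance first
}


def changed_from_defaults(params, defaults):
    """Return only the entries whose value differs from the assumed defaults."""
    return {key: value for key, value in params.items() if defaults.get(key) != value}


if __name__ == "__main__":
    for key, value in changed_from_defaults(corridor_params, RTABMAP_DEFAULTS).items():
        print(f"{key} = {value}")
```

Keeping RGBD/OptimizeMaxError at its default in this set is deliberate: the idea is to make the odometry covariance realistic before relaxing the rejection threshold.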


Posted on the Official RTAB-Map Forum (Nabble).
