Questions about a multi sensor setup

|

This post was updated on .

Hi,

I would like to experiment with a home built setup that I’m free to design as I wish. I have a precise idea of what I want but I’m not certain to understand what is doable within RTABMap, what isn’t but could be coded quickly, and what would require more long term feature requests. I would like to use several sensors and record large ros bags so that I can then try several solutions for odometry. Those sensors would be: - One 9dof IMU - Two monochromatic low res high fps cameras for VIO - Two high res low fps RGB cameras, one looking forward placed between the monochromatic cameras, and one looking backwards. This would be for mesh texturing and two-way loop closure detection - A Livox Mid-360 for LIO Calibration of the backward looking camera would be done wrt the Livox. Cameras would be hardware synced at say, 20Hz for the stereo cameras and 2Hz for the rgb cameras. Using all sensors, I would be interested in trying tightly coupled slams like FAST-LIVO2, or loosely coupling VIO and LIO by using both as separate odom links with different covariances. I have the following questions regarding the integration of RTABMap into the picture, using it as a SLAM backend. - is it possible to have cameras of different types inside one node ? If not, is it possible to have different cameras for different nodes ? In which case there could be one node for each monochromatic keyframe as well as one node for every rgb image. If none of those is possible then keeping a node only for rgb images can be a solution (hence discarding monochromatic images from the db). - in the case where it is possible to have nodes containing monochromatic stereo images and other containing rgb images of diametrically opposed cameras, is it possible to leverage stereo crosscheck for matching in the first case and use the images fully independently in the other case ? - for loop closures is it possible to compute the relative tf using the full LiDAR scans instead of the matched features ? (Edit: it is, registration options 1 or 2). If so, is it even possible to compute the tf by using local (in time not in space) submaps around each node, to ensure more covering between the two scans ? I hope those questions won’t seem too specific and that there is a solution for my project where I can use RTABMap as backend :) Thanks ! |

|

Administrator

|

Hi,

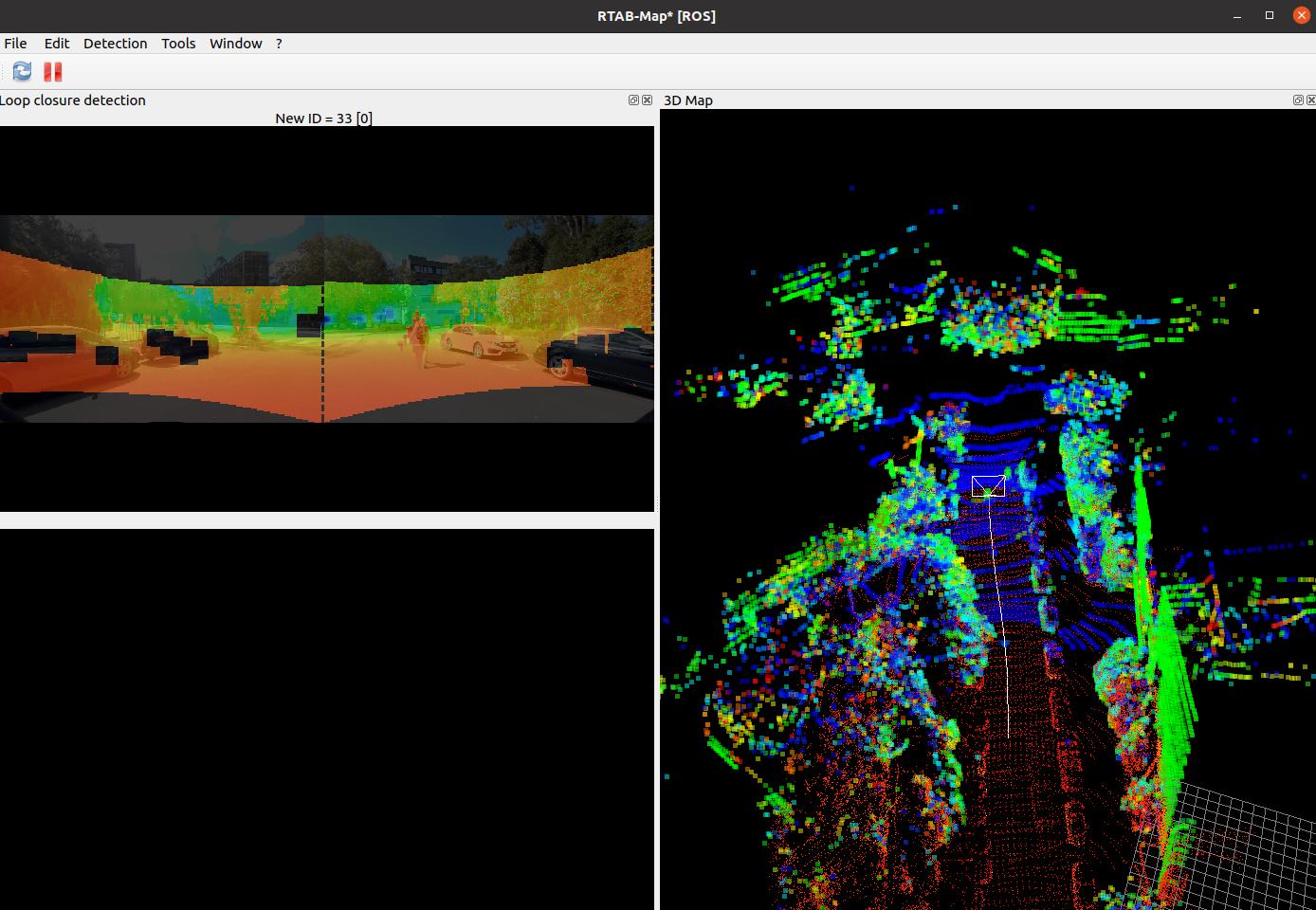

It is not possible to mix up monocular and stereo cameras directly. If all cameras have the same resolution, it could be possible to feed the stereo camera using its depth image instead of left/right (RGB-D input) and projecting the lidar in monocular cameras to generate two other RGB-D inputs. That would give three compatible RGB-D inputs (or three grayscale-Depth inputs), but they should have all the same resolution. If you have an upstream node that switch input data from the stereo camera and the RGB cameras, it could be possible to add interleaved nodes in the database (1 stereo frame, then 2 monocular RGB, then 1 stereo frame, and so on). You would need to adjust the frame rate at which you want nodes in that exernal node and set Rtabmap/DetectionRate at 0. Note that my feeling is that you could start with the two RGB monocular cameras + lidar setup. With monocular cameras, it is possible to generate 3D features with "Mem/StereoFromMotion" parameter, but it is no super accurate. I would recommend to generate depth images for these monocular cameras with your lidar. Follow the launch files used in that demo (car with two monocular cameras and lidar), in particular these options to generate the depth images: <param if="$(arg cameras)" name="gen_depth" type="bool" value="true"/> <param if="$(arg cameras)" name="gen_depth_decimation" type="int" value="8"/> <param if="$(arg cameras)" name="gen_depth_fill_holes_size" type="int" value="10"/> <param if="$(arg cameras)" name="gen_depth_fill_holes_error" type="double" value="100"/> <param if="$(arg cameras)" name="gen_depth_fill_iterations" type="int" value="2"/>Note that we can also do the lidar projection externally using rtabmap_util/poincloud_to_depthimage, and then synchronize two RGB-D streams with the lidar. When integrating multiple sensors that are not synchronized, ideally set odom_sensor_sync:=true for rtabmap node. You won't be able to enable loop closure detection on only one type of camera or coloring the lidar with RGB frames only and not the stereo frames. Internally, rtabmap will treat all nodes the same. Yes, set "Reg/Strategy:=1". For submaps, if you use icp_odometry node, this is what it does. On rtabmap side, on proximity detections, when "RGBD/ProximityPathMaxNeighbors>1", it will compare the current scan against assembled scans (or submaps) around the closest node in the map. If you want the "current scan" to be a submap, you can use point_cloud_assembler node (with circular_buffer enabled). The database will be bigger and "RGBD/ProximityPathMaxNeighbors" could be set to 1 because all nodes will contain accumulated scans, assembling scans like this assumes that the input odometry is accurate enough during the time they are assembled (the back-end won't be able to correct errors in the assembled scans inside a single node afterwards). In summary, with the data you have, I would start with a setup similar to that demo (corresponding youtube video).  cheers, Mathieu |

|

Hi Mathieu,

Thank you for your answer :) I think that all things considered it might be better to keep a node only for RGB images. I hadn't realised that proximity loops allowed loops without visual cues though, which makes me wonder if the backward-looking cameras is really needed then. This leads to another question though : the rtabmap-detectMoreLoopClosures allows to detect more visual-based loops, and to close them using whatever registration strategy. Finding more global loops could then lead to new relevant pure scan-based proximity loops, but I don't know if there is a way to detect more proximity loops : is there one ? Last thing, I would like to compute VIO and LIO in parallel, and merge them into one fused odometry. Is there a way in RTAB to mix two sources of odometry, which would allow to have one flexible database, or do I need to externally mix them and feed one only odom topic ? Thanks a lot |

«

Return to Official RTAB-Map Forum

|

1 view|%1 views

| Free forum by Nabble | Edit this page |