Zed2i, Jetson Xavier and remote viewing

|

Hi

I installed all the necessary SDKs, wrappers, etc. to run RTABMAP with ROS and a ZED2i on a Jetson Xavier running Ubuntu 18.04.6 LTS. https://github.com/stereolabs/zed-ros-examples/blob/master/examples/zed_rtabmap_example/README.md

However, when launching zed_rtabmap.launch on the Jetson board, the 3D map window of RTABMAP is kind of frozen, showing some other desktop image. RVIZ shows the live camera, point cloud and robot position in space.

I then modified the launch file so that it does not launch rviz on the Jetson board, installed the zed ros examples on a laptop, modified the export lines in bash to point to the ROS master, and modified the launch file to start only rviz on the laptop. I am able to see the topics published by the robot, but there is no live image in rviz on the laptop; I can see just the point cloud. RTABMAP on the robot still shows the 3D map frozen.

I am kind of stuck. I have tried many things and read a lot of info online, but I am still unable to run a 3D mapping of the environment and visualize it properly.

|

Administrator

|

Hi,

The 3D rendering problem is known on JetPack, and explained in this issue (if you want to read the 97 posts). There are workarounds like the one explained in this comment, but I didn't keep up with the latest JetPack versions to see if the workarounds are still working. I hope that with JetPack 5 (on Ubuntu 20.04), the OpenGL rendering problem will be fixed.

You don't need to launch rtabmapviz on the robot if you are going to visualize on a laptop (it is better not to, to save computation time). We can launch rtabmapviz or rviz on the laptop, though rviz is probably the more general choice.

When you say "just point cloud", which topic? The zed point cloud? To debug topics not received on the remote laptop, I generally run "rostopic hz /my/topic/name" on the robot and on the laptop, to see if the topic is actually published by the robot and if the remote computer receives it. If the topic is published on the robot but not received on the laptop, explicitly set ROS_IP on both computers. When streaming images, it is better to test with Ethernet than WiFi to avoid bandwidth problems.

See also this page about remote mapping (it contains zed examples). This page also shows other network issues that we can have.

cheers, Mathieu
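As a rough illustration of the ROS_IP advice above, here is a minimal Python sketch that checks the ROS 1 network variables on one machine. The function name `check_ros_network_env` and the example addresses are made up for illustration; only the variable names ROS_MASTER_URI, ROS_IP and ROS_HOSTNAME come from ROS itself. It does not replace running "rostopic hz" on both sides, it only catches the most common misconfigurations before you do.

```python
import os
from urllib.parse import urlparse

# Addresses that only make sense on the machine itself.
LOOPBACK = {"localhost", "127.0.0.1", "::1"}


def check_ros_network_env(env, is_remote_viewer):
    """Return a list of likely problems in the ROS 1 network settings.

    env: mapping of environment variables (e.g. os.environ).
    is_remote_viewer: True on the laptop, False on the robot running roscore.
    """
    problems = []

    # ROS_MASTER_URI must be a full URI such as http://<robot-ip>:11311
    master_host = urlparse(env.get("ROS_MASTER_URI", "")).hostname
    if not master_host:
        problems.append("ROS_MASTER_URI is not set or is not a valid http://host:port URI")
    elif is_remote_viewer and master_host in LOOPBACK:
        problems.append("ROS_MASTER_URI points to this machine, not to the robot")

    # Each computer should advertise an address the other one can reach.
    ros_ip = env.get("ROS_IP", "")
    if not ros_ip and not env.get("ROS_HOSTNAME"):
        problems.append("neither ROS_IP nor ROS_HOSTNAME is set")
    elif ros_ip in LOOPBACK:
        problems.append("ROS_IP is a loopback address, unreachable from the other computer")

    return problems


if __name__ == "__main__":
    # Run this on the laptop; use is_remote_viewer=False on the robot.
    found = check_ros_network_env(os.environ, is_remote_viewer=True)
    for line in found or ["no obvious problems found"]:
        print(line)
```

If this reports nothing on both computers and "rostopic hz" still shows no messages on the laptop, the cause is more likely bandwidth or a firewall than the environment variables.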

|

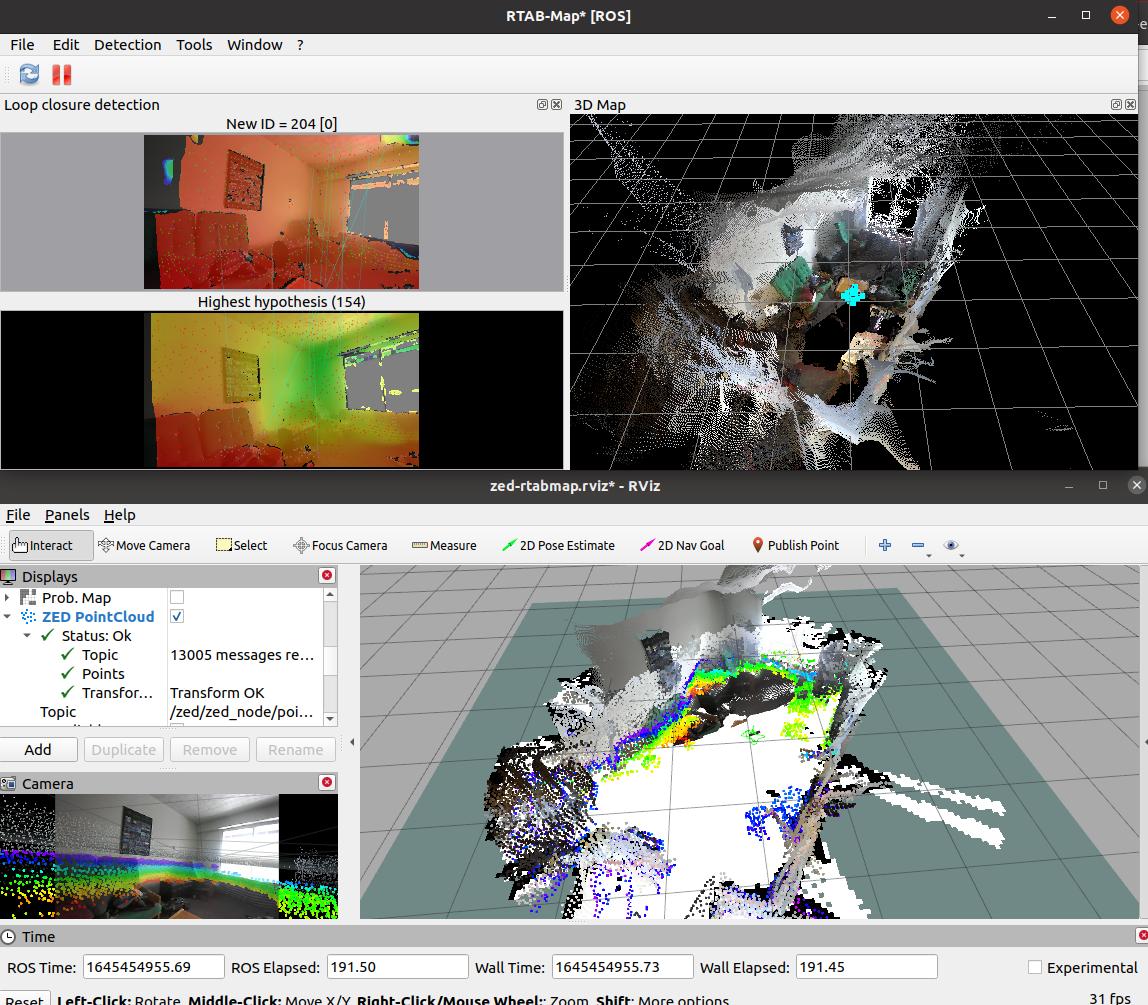

While I am trying to figure out the remote viewing part, I also tried to set up everything on a desktop PC with a GTX 1080 Ti card.

Using the official zed_rtabmap.launch, both rviz and the rtabmap gui are shown. However, the point cloud is not fused (as you can see in the attached video).

20220210_164209.mp4

I also attach a zip of the entire zed rtabmap example.

zed_rtabmap_example.zip

I tried to set the parameter mapping/mapping_enabled to true, but in this case the 3D map window in the RTABMAP gui is frozen and the RVIZ window just shows the camera position and some points, no fusion at all.

Thank you

|

Administrator

|

Hi,

I just tried https://github.com/stereolabs/zed-ros-examples/blob/master/examples/zed_rtabmap_example/README.md and it seems to work properly. Maybe the rtabmap node is not working for some reason; are there errors in the terminal?

cheers, Mathieu

|

Did you just try the example without making any changes to the config files or anything else?

Are you using Ubuntu 18 or 20?

|

Administrator

|

Yeah, I downloaded this repo "as is": https://github.com/stereolabs/zed-ros-examples

Ubuntu 20.04 on a laptop. |

|

After reinstalling the OS (I tried both Ubuntu 18 and 20), I was finally able to make it work.

Am I correct to say that the fused cloud is only visible in the rtabmap gui (the rviz gui only shows the point cloud as seen by the camera at any one time, not the fused one)?

What is the meaning of the mapping/mapping_enabled parameter (which is set to false by default)?

My final goal is not navigation, but to get a fused point cloud with overlaid pictures taken by the camera. Then I need to do some measurements or other kinds of postprocessing on the 3D map obtained during scanning.

|

Administrator

|

In rviz, you can also have the fused point clouds like in rtabmapviz, using the rtabmap_ros/MapCloud plugin.

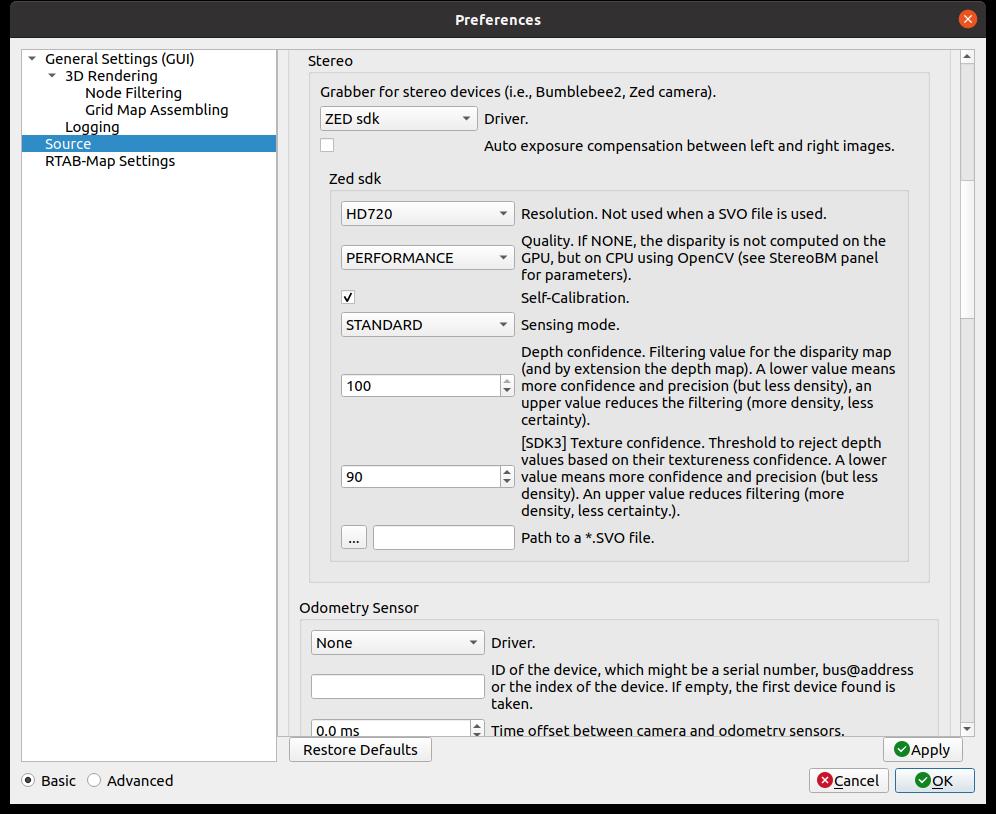

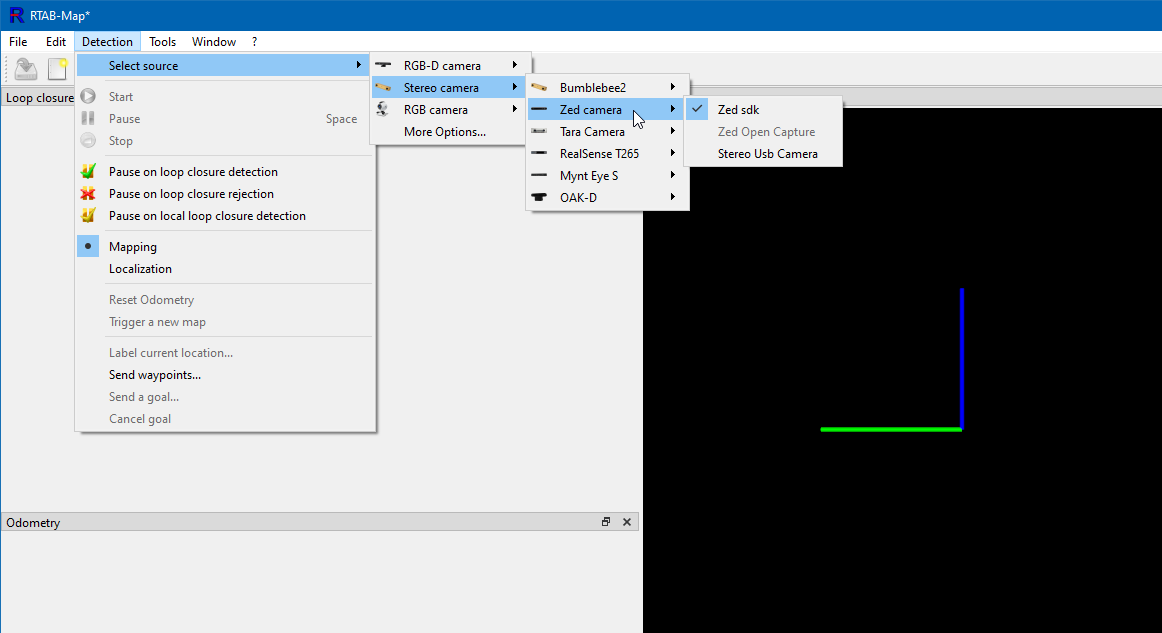

Not sure which node you are referring to with those mapping/mapping_enabled parameters.

If it is only scanning with the zed camera using rtabmap, it could be simpler to build the rtabmap library with zed support and launch the rtabmap standalone application (or even use the Windows binaries here with CUDA/zed support to avoid recompiling). Otherwise, under ROS, you may look at those examples instead: http://wiki.ros.org/rtabmap_ros/Tutorials/HandHeldMapping

The zed rtabmap example seems more tuned for robot navigation (for which an occupancy grid has to be created).

cheers, Mathieu

|

Do I absolutely need to use ZED SDK 3.6.5 and CUDA 11.5 to be able to run RTABMap-0.20.16-win64-cuda11.5-zed3.6.5? I have ZED SDK 3.7 on my machine at the moment.

Thank you

|

I uninstalled zed sdk 3.7 and installed sdk 3.6.5, then relaunched rtabmap and selected zed sdk as the source, but nothing happens.

ZED SDK Explorer, Sensor Viewer and the other tools work fine.

|

|

Administrator

|

When you press the "New database" button, then the "Play" button after selecting zed sdk, are there errors?

EDIT: not sure if an installed zed sdk 3.7 will work with the 3.6.5 binaries; I assume it would, but I haven't tested it yet.

|

I reset the parameters and reinstalled the sdk. Now when I click play I can see the live view and the 3D map building up.

However, I see many "loop closure rejected" messages, even when moving the camera slowly. The resulting point cloud is pretty bad. Any recommendations on setting the parameters in the preferences to get the most accurate point cloud?
